This is the first article in a miniseries about macros. I originally planned to cover this topic in my upcoming Elixir in Action book, but decided against it because the subject doesn’t quite fit the main theme of the book, which focuses more on the underlying VM and the crucial parts of OTP.

Instead, I decided to provide a treatment of macros here. Personally, I find the subject of macros very interesting, and in this miniseries I’ll try to explain how they work, providing some basic techniques and advice on how to write them. While I’m convinced that writing macros is not very hard, it certainly requires a higher level of attention compared to plain Elixir code. Thus, I think it’s very helpful to understand some inner details of the Elixir compiler. Knowing how things tick behind the scenes makes it easier to reason about meta-programming code.

This will be a medium-level difficulty text. If you’re familiar with Elixir and Erlang, but are still somewhat confused about macros, then you’re in the right place. If you’re new to Elixir and Erlang, it’s probably better to start with something else, for example the Getting started guide, or one of the available books.

Meta-programming

Chances are you’re already somewhat familiar with meta-programming in Elixir. The essential idea is that we have code that generates code based on some input.

Owing to macros we can write constructs like this one from Plug:

    get "/hello" do
      send_resp(conn, 200, "world")
    end

    match _ do
      send_resp(conn, 404, "oops")
    end

or this from ExActor:

    defmodule SumServer do
      use ExActor.GenServer

      defcall sum(x, y), do: reply(x+y)
    end

In both cases, we are running some custom macro at compile time that transforms the original code into something else. Calls to Plug’s get and match will create a function, while ExActor’s defcall will generate two functions and the code that properly propagates arguments from the client process to the server.

Elixir itself is heavily powered by macros. Many constructs, such as defmodule, def, if, unless, and even defmacro are actually macros. This keeps the language core minimal, and simplifies further extensions to the language.
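One way to check this claim yourself is to ask the Kernel module which macros it exports. The following is a minimal sketch: MacroCheck and kernel_macro? are hypothetical names introduced only for illustration, while Kernel.__info__(:macros) is the standard reflection call that returns a list of {name, arity} pairs.

```elixir
defmodule MacroCheck do
  # Returns true when Kernel exports a macro with the given name and arity.
  def kernel_macro?(name, arity) do
    {name, arity} in Kernel.__info__(:macros)
  end
end

# Each of these constructs is reported as a macro, not as a function.
for {name, arity} <- [defmodule: 2, def: 2, if: 2, defmacro: 2] do
  IO.puts("#{name}/#{arity} is a macro: #{MacroCheck.kernel_macro?(name, arity)}")
end
```

An ordinary function such as length/1 does not appear in that list, so the same check returns false for it.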

Related, but somewhat less known is the possibility to generate functions on the fly:

    defmodule Fsm do
      fsm = [
        running: {:pause, :paused},
        running: {:stop, :stopped},
        paused: {:resume, :running}
      ]

      for {state, {action, next_state}} <- fsm do
        def unquote(action)(unquote(state)), do: unquote(next_state)
      end

      def initial, do: :running
    end

    Fsm.initial
    # :running

    Fsm.initial |> Fsm.pause
    # :paused

    Fsm.initial |> Fsm.pause |> Fsm.pause
    # ** (FunctionClauseError) no function clause matching in Fsm.pause/1

Here, we have a declarative specification of an FSM that is (again at compile time) transformed into corresponding multi-clause functions.

A similar technique is employed, for example, by Elixir itself to generate the String.Unicode module. Essentially, this module is generated by reading the UnicodeData.txt and SpecialCasing.txt files, where codepoints are described. Based on the data from these files, various functions (e.g. upcase, downcase) are generated.
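To make the idea concrete without touching real Unicode files, here is a small sketch of the same approach. The Casing module, the upcase_char function, and the inline mappings list are all assumptions made for illustration. The real String.Unicode module reads its data from files and is considerably more involved.

```elixir
defmodule Casing do
  # Hypothetical stand-in for data that would be read from a file
  # while the module is being compiled.
  mappings = [{"a", "A"}, {"b", "B"}, {"c", "C"}]

  # One function clause is generated per table entry.
  for {lower, upper} <- mappings do
    def upcase_char(unquote(lower)), do: unquote(upper)
  end

  # Fallback clause for characters that are not in the table.
  def upcase_char(other), do: other
end

IO.puts(Casing.upcase_char("a"))  # A
IO.puts(Casing.upcase_char("z"))  # z
```

Once the module is compiled, the table itself is gone. What remains are plain function clauses that pattern match on the input.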

In either case (macros or in-place code generation), we are performing some transformation of the abstract syntax tree structure in the middle of the compilation. To understand how this works, you need to learn a bit about the compilation process and the AST.

Compilation process

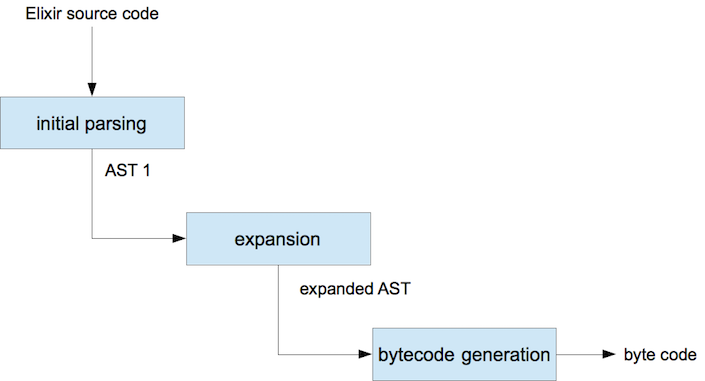

Roughly speaking, the compilation of Elixir code happens in three phases:

- The input source code is parsed, and a corresponding abstract syntax tree (AST) is produced. The AST represents your code in the form of nested Elixir terms.
- Then the expansion phase kicks off. It is in this phase that various built-in and custom macros are called to transform the input AST into the final version.
- Once this transformation is done, Elixir can produce the final bytecode - a binary representation of your source program.

This is just an approximation of the process. For example, the Elixir compiler actually generates Erlang AST and relies on Erlang functions to transform it into bytecode, but it’s not important to know the exact details. However, I find this general picture helpful when reasoning about meta-programming code.
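The phases can be loosely imitated by hand, which may help in building intuition. This is only a sketch under a few assumptions: PhasesSketch and its function names are hypothetical, and evaluating the AST with Code.eval_quoted/1 merely stands in for the final phase, since the real compiler emits bytecode rather than evaluating anything. Code.string_to_quoted!/1 and Macro.expand/2 are standard library functions.

```elixir
defmodule PhasesSketch do
  # Phase 1: turn source text into an AST.
  def parse(source), do: Code.string_to_quoted!(source)

  # Phase 2: expand the macro call at the root of the AST.
  def expand(ast), do: Macro.expand(ast, __ENV__)

  # Stand-in for phase 3: evaluate the AST instead of emitting bytecode.
  def run(ast) do
    {value, _bindings} = Code.eval_quoted(ast)
    value
  end
end

ast = PhasesSketch.parse("if 1 < 2, do: :yes, else: :no")
expanded = PhasesSketch.expand(ast)

# The if macro is gone after expansion; what remains is a lower-level construct.
IO.puts(Macro.to_string(expanded))
IO.inspect(PhasesSketch.run(ast))  # :yes
```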

The main point to understand is that meta-programming magic happens in the expansion phase. The compiler initially starts with an AST that closely resembles your original Elixir code, and then expands it to the final version.

Another important takeaway from this diagram is that in Elixir, meta-programming stops after binaries are produced. Except for code upgrades or some dynamic code loading trickery (which is beyond the scope of this article), you can be sure that your code is not redefined. While meta-programming always introduces an invisible (or not so obvious) layer to the code, in Elixir this at least happens only in compile-time, and is thus independent of various execution paths of a program.

Given that code transformation happens in compile time, it is relatively easy to reason about the final product, and meta-programming doesn’t interfere with static analysis tools, such as dialyzer. Compile-time meta-programming also means that we get no performance penalty. Once we get to run-time, the code is already shaped, and no meta-programming construct is running.

Creating AST fragments

So what is an Elixir AST? It is an Elixir term, a deeply nested hierarchy that represents syntactically correct Elixir code. To make things clearer, let’s see some examples. To generate the AST of some code, you can use the quote special form:

    iex(1)> quoted = quote do 1 + 2 end
    {:+, [context: Elixir, import: Kernel], [1, 2]}

Quote takes an arbitrarily complex Elixir expression and returns the corresponding AST fragment that describes that input code.

In our case, the result is an AST fragment describing a simple sum operation (1+2). This is often called a quoted expression.

Most of the time you don’t need to understand the exact details of the quoted structure, but let’s take a look at this simple example. In this case our AST fragment is a triplet that consists of:

- An atom identifying the operation that will be invoked (:+)
- A context of the expression (e.g. imports and aliases). Most of the time you don’t need to understand this data
- The arguments (operands) of the operation

The main point is that a quoted expression is an Elixir term that describes the code. The compiler will use this to eventually generate the final bytecode.
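Because a quoted expression is an ordinary term, it can be taken apart with plain pattern matching. The sketch below illustrates the triplet shape described above. AstParts and split are hypothetical names used only for this example.

```elixir
defmodule AstParts do
  # A call node is a three-element tuple. We keep the operation and the
  # arguments, and ignore the metadata in the middle.
  def split({operation, _metadata, arguments}) when is_list(arguments) do
    {operation, arguments}
  end
end

{operation, arguments} = AstParts.split(quote do 1 + 2 end)
IO.inspect(operation)  # :+
IO.inspect(arguments)  # [1, 2]
```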

Though not very common, it is possible to evaluate a quoted expression:

    iex(2)> Code.eval_quoted(quoted)
    {3, []}

The result tuple contains the result of the expression, and the list of variable bindings that are made in that expression.

However, before the AST is somehow evaluated (which is usually done by the compiler), the quoted expression is not semantically verified. For example, when we write the following expression:

    iex(3)> a + b
    ** (RuntimeError) undefined function: a/0

We get an error, since there’s no variable (or function) called a.

In contrast, if we quote the expression:

    iex(3)> quote do a + b end
    {:+, [context: Elixir, import: Kernel], [{:a, [], Elixir}, {:b, [], Elixir}]}

There’s no error. We have a quoted representation of a+b, which means we generated the term that describes the expression a+b, regardless of whether these variables exist or not. The final code is not yet emitted, so there’s no error.
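The same behaviour can be observed inside a compiled module. In this sketch (QuoteOnly and sum_of_unknowns are hypothetical names), the function compiles even though neither a nor b is defined anywhere, because quote only records the names.

```elixir
defmodule QuoteOnly do
  # Quoting never looks up `a` or `b`; it only records their names.
  def sum_of_unknowns do
    quote do
      a + b
    end
  end
end

# Rendering the fragment back to source text shows what was captured.
QuoteOnly.sum_of_unknowns() |> Macro.to_string() |> IO.puts()  # a + b
```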

If we insert that representation into some part of the AST where a and b are valid identifiers, this code will be correct.

Let’s try this out. First, we’ll quote the sum expression:

    iex(4)> sum_expr = quote do a + b end

Then we’ll make a quoted binding expression:

    iex(5)> bind_expr = quote do
              a=1
              b=2
            end

Again, keep in mind that these are just quoted expressions. They are simply the data that describes the code, but nothing is yet evaluated. In particular, variables a and b don’t exist in the current shell session.

To make these fragments work together, we must connect them:

    iex(6)> final_expr = quote do
              unquote(bind_expr)
              unquote(sum_expr)
            end

Here we generate a new quoted expression that consists of whatever is in bind_expr, followed by whatever is in sum_expr. Essentially, we produced a new AST fragment that combines both expressions. Don’t worry about the unquote part - I’ll explain this in a bit.

In the meantime, we can evaluate this final AST fragment:

    iex(7)> Code.eval_quoted(final_expr)
    {3, [{{:a, Elixir}, 1}, {{:b, Elixir}, 2}]}

Again, the result consists of the result of an expression (3) and bindings list where we can see that our expression bound two variables a and b to the respective values of 1 and 2.

This is the core of the meta-programming approach in Elixir. When meta-programming, we essentially compose various AST fragments to generate some alternate AST that represents the code we want to produce. In doing so, we’re most often not interested in the exact contents or structure of the input AST fragments (the ones we combine). Instead, we use quote to generate and combine input fragments into some decorated code.

Unquoting

This is where unquote comes into play. Notice that whatever is inside the quote block is, well, quoted - turned into an AST fragment. This means we can’t normally inject the contents of some variable that exists outside of our quote. Looking at the example above, this wouldn’t work:

    quote do
      bind_expr
      sum_expr
    end

In this snippet, quote simply generates quoted references to bind_expr and sum_expr variables that must exist in the context where this AST will be interpreted. However, this is not what we want in our case. What we need is a way of directly injecting contents of bind_expr and sum_expr to corresponding places in the AST fragment we’re generating.

That’s the purpose of unquote(…) - the expression inside parentheses is immediately evaluated, and inserted in place of the unquote call. This in turn means that the result of unquote must also be a valid AST fragment.
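The difference between the two forms is easy to see side by side. The following sketch uses a hypothetical UnquoteDemo module whose compare function quotes the same variable once by name and once through unquote.

```elixir
defmodule UnquoteDemo do
  def compare(fragment) do
    # Without unquote, quote records a reference to a variable named `fragment`.
    by_name =
      quote do
        fragment
      end

    # With unquote, the AST held in `fragment` is injected in place.
    by_value =
      quote do
        unquote(fragment)
      end

    {by_name, by_value}
  end
end

{by_name, by_value} = UnquoteDemo.compare(quote do 1 + 2 end)
IO.inspect(by_name)   # a variable node whose name is :fragment
IO.inspect(by_value)  # the + node with arguments [1, 2]
```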

Another way of looking at unquote is to treat it as an analogue to string interpolation (#{}). With strings you can do this:

    "... #{some_expression} ... "

Similarly, when quoting you can do this:

    quote do
      ...
      unquote(some_expression)
      ...
    end

In both cases, you evaluate an expression that must be valid in the current context, and inject the result in the expression you’re building (either string, or an AST fragment).

It’s important to understand this, because unquote is not a reversal of a quote. While quote takes a code fragment and turns it into a quoted expression, unquote doesn’t do the opposite. If you want to turn a quoted expression into a string, you can use Macro.to_string/1.
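As a small illustration of how quote, unquote, and Macro.to_string/1 fit together, here is a sketch that builds an addition out of two quoted operands, renders it as text, and evaluates it. AstBuilder and add are hypothetical names. The exact text printed by Macro.to_string/1 (for example, whether parentheses are included) can vary between Elixir versions, so it is not shown here.

```elixir
defmodule AstBuilder do
  # Builds the AST of an addition from two already quoted operands.
  def add(left_ast, right_ast) do
    quote do
      unquote(left_ast) + unquote(right_ast)
    end
  end
end

ast = AstBuilder.add(quote(do: 1 + 2), quote(do: 3 * 4))

# Macro.to_string/1 renders the AST as source text.
IO.puts(Macro.to_string(ast))

# Evaluating the combined fragment yields 3 + 12.
{value, _bindings} = Code.eval_quoted(ast)
IO.inspect(value)  # 15
```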

Example: tracing expressions

Let’s combine this theory into a simple example. We’ll write a macro that can help us in debugging the code. Here’s how this macro can be used:

    iex(1)> Tracer.trace(1 + 2)
    Result of 1 + 2: 3
    3

Tracer.trace takes a given expression and prints its result to the screen. Then the result of the expression is returned.

It’s important to realize that this is a macro, which means that the input expression (1 + 2) will be transformed into something more elaborate - code that prints the result and returns it. This transformation will happen at expansion time, and the resulting bytecode will contain a decorated version of the input code.

Before looking at the implementation, it might be helpful to imagine the final result. When we call Tracer.trace(1+2), the resulting bytecode will correspond to something like this:

    mangled_result = 1+2
    Tracer.print("1+2", mangled_result)
    mangled_result

The name mangled_result indicates that the Elixir compiler will somehow mangle all temporary variables we’re introducing in our macro. This is also known as macro hygiene, and we’ll discuss it later in this series (though not in this article).

Given this template, here’s how the macro can be implemented:

    defmodule Tracer do
      defmacro trace(expression_ast) do
        string_representation = Macro.to_string(expression_ast)

        quote do
          result = unquote(expression_ast)
          Tracer.print(unquote(string_representation), result)
          result
        end
      end

      def print(string_representation, result) do
        IO.puts "Result of #{string_representation}: #{inspect result}"
      end
    end

Let’s analyze this code one step at a time.

First, we define the macro using defmacro. A macro is essentially a special kind of function. Its name will be mangled, and this function is meant to be invoked only in the expansion phase (though you could theoretically still call it at run-time).

Our macro receives a quoted expression. This is very important to keep in mind - whichever arguments you send to a macro, they will already be quoted. So when we call Tracer.trace(1+2), our macro (which is a function) won’t receive 3. Instead, the contents of expression_ast will be the result of quote(do: 1+2).

In the first line of the macro body, we use Macro.to_string/1 to compute the string representation of the received AST fragment. This is the kind of thing you can’t do with a plain function that is called at runtime. While it’s possible to call Macro.to_string/1 at runtime, the problem is that we no longer have access to the AST, and therefore can’t know the string representation of the original expression.
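The contrast between a function and a macro can be shown with a pair of deliberately tiny definitions. This is a sketch only: Describe, as_function, as_macro, and DescribeDemo are hypothetical names. The macro is used from a second module because a macro must be defined and required before the code that calls it is compiled.

```elixir
defmodule Describe do
  # A plain function only ever sees the computed value.
  def as_function(value), do: inspect(value)

  # A macro sees the AST of its argument and can render it at compile time.
  # The returned string is itself a valid AST fragment (a literal).
  defmacro as_macro(expression_ast) do
    Macro.to_string(expression_ast)
  end
end

defmodule DescribeDemo do
  require Describe

  def run do
    {Describe.as_function(1 + 2), Describe.as_macro(1 + 2)}
  end
end

IO.inspect(DescribeDemo.run())  # {"3", "1 + 2"}
```

The function receives 3 and has no way of recovering the text 1 + 2, while the macro receives the quoted form of 1 + 2 and never sees the value 3.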

Once we have a string representation, we can generate and return the resulting AST, which is done by the quote do … end construct. The result of this is the quoted expression that will replace the original Tracer.trace(…) call.

Let’s look at this part closer:

    quote do
      result = unquote(expression_ast)
      Tracer.print(unquote(string_representation), result)
      result
    end

If you understood the explanation of unquote then this is reasonably simple. We essentially inject the expression_ast (quoted 1+2) into the fragment we’re generating, taking the result of the operation into the result variable. Then we print this together with the stringified expression (obtained via Macro.to_string/1), and finally return the result.

Expanding an AST

It is easy to observe how this connects in the shell. Start the iex shell and copy-paste the definition of the Tracer module above:

    iex(1)> defmodule Tracer do
              ...
            end

Then, you must require the Tracer module:

iex(2)> require Tracer

Next, let’s quote a call to trace macro:

    iex(3)> quoted = quote do Tracer.trace(1+2) end
    {{:., [], [{:__aliases__, [alias: false], [:Tracer]}, :trace]}, [],
     [{:+, [context: Elixir, import: Kernel], [1, 2]}]}

Now, this output looks a bit scary, and you usually don’t have to understand it. But if you look close enough, somewhere in this structure you can see a mention of Tracer and trace, which proves that this AST fragment corresponds to our original code, and is not yet expanded.

Now, we can turn this AST into an expanded version, using Macro.expand/2:

    iex(4)> expanded = Macro.expand(quoted, __ENV__)
    {:__block__, [],
     [{:=, [],
       [{:result, [counter: 5], Tracer},
        {:+, [context: Elixir, import: Kernel], [1, 2]}]},
      {{:., [], [{:__aliases__, [alias: false, counter: 5], [:Tracer]}, :print]},
       [], ["1 + 2", {:result, [counter: 5], Tracer}]},
      {:result, [counter: 5], Tracer}]}

This is now the fully expanded version of our code, and somewhere inside it you can see mentions of result (the temporary variable introduced by the macro), and the call to Tracer.print/2. You can even turn this expression into a string:

    iex(5)> Macro.to_string(expanded) |> IO.puts
    (
      result = 1 + 2
      Tracer.print("1 + 2", result)
      result
    )

The point of all this is to demonstrate that your macro call is really expanded to something else. This is how macros work. Though we only tried it from the shell, the same things happen when we’re building our projects with mix or elixirc.

I guess this is enough for the first session. You’ve learned a bit about the compilation process and the AST, and seen a fairly simple example of a macro. In the next installment, I’ll dive a bit deeper, discussing some mechanical aspects of macros.
